The main data products of the GALAH survey are stellar parameters and elemental abundances for the stars we observe. Over time, the methods we use to derive them have evolved. In particular, we used The Cannon for Data Release 1 and Data Release 2, switched to Spectroscopy Made Easy (SME) for Data Release 3, and now use SME together with neural networks for Data Release 4. For any given data release, consult the accompanying paper for a description of the methods.

On this page we give an overview of the method as used for GALAH DR4. See Buder et al. (2021) for all the details of the data analysis.

Data Release 4 Analysis

Changes in data analysis from Data Release 3

There are three main changes to the analysis methods for DR4 compared to DR3.

- A neural network, trained on synthetic spectra produced with SME, for rapid interpolation of synthetic spectra across combinations of stellar parameters and elemental abundances.

- Simultaneous fitting of all stellar parameters and elemental abundances.

- A three-phase process for determining stellar parameters and elemental abundances: first, a purely spectroscopic analysis of individual observations of each star; second, coaddition of multiple observations of the same star and reanalysis of all spectra, folding in photometric and astrometric information; and third, a post-analysis scan for signs of binarity, emission lines, and interstellar absorption.
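The first two changes work together: a neural network stands in for running SME on the fly, and all labels are then fitted at once against that fast emulator. The sketch below is a minimal illustration under stated assumptions, not the GALAH pipeline: it assumes a small fully connected network (in the spirit of The Payne) mapping a vector of labels, scaled to roughly [-0.5, 0.5], to a normalised flux array. The label names, network size and random weights are hypothetical stand-ins; in DR4 the network is trained on SME synthetic spectra.

```python
import numpy as np
from scipy.optimize import least_squares

# Hypothetical label set: Teff, logg, [Fe/H] and two example abundances [X/Fe].
LABEL_NAMES = ["teff", "logg", "fe_h", "mg_fe", "ca_fe"]
N_LABELS = len(LABEL_NAMES)
N_PIXELS = 200  # number of wavelength pixels (illustrative)
N_HIDDEN = 16   # hidden-layer width (illustrative)

rng = np.random.default_rng(42)

# Stand-in network weights. A real emulator would learn these from SME
# synthetic spectra; random values are used here purely for illustration.
W1 = rng.normal(scale=0.5, size=(N_HIDDEN, N_LABELS))
b1 = rng.normal(scale=0.1, size=N_HIDDEN)
W2 = rng.normal(scale=0.05, size=(N_PIXELS, N_HIDDEN))
b2 = np.full(N_PIXELS, 0.9)


def emulate_flux(scaled_labels):
    """Map scaled labels to a normalised spectrum with a one-hidden-layer network."""
    hidden = np.tanh(W1 @ scaled_labels + b1)
    return W2 @ hidden + b2


def residuals(scaled_labels, flux, flux_err):
    """Error-weighted residuals; every label is a free parameter at the same time."""
    return (emulate_flux(scaled_labels) - flux) / flux_err


# Simulate an "observed" spectrum from known labels plus Gaussian noise.
true_labels = np.array([0.2, -0.1, 0.05, 0.1, -0.2])
flux_err = np.full(N_PIXELS, 0.005)
observed = emulate_flux(true_labels) + rng.normal(scale=flux_err)

# Fit all labels simultaneously, starting from the centre of the scaled label range.
fit = least_squares(
    residuals,
    x0=np.zeros(N_LABELS),
    args=(observed, flux_err),
    bounds=(-0.5, 0.5),
)
print(dict(zip(LABEL_NAMES, np.round(fit.x, 3))))
```

Because every label shares one residual vector, correlations between, for example, logg and [Fe/H] are handled within a single fit rather than across separate passes, which is one motivation for fitting all parameters and abundances simultaneously.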

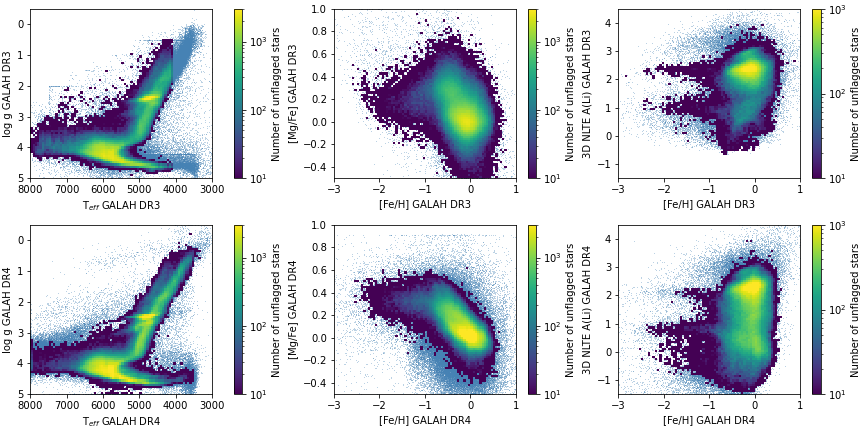
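The second phase of the process above begins by combining repeat observations of the same star before reanalysis. The sketch below assumes the individual spectra have already been shifted to a common rest frame and resampled onto one wavelength grid, and it uses inverse-variance weighting, a common choice for coadding; whether DR4 uses exactly this scheme is an assumption here, and `coadd_spectra` is a hypothetical helper.

```python
import numpy as np


def coadd_spectra(fluxes, errors):
    """Inverse-variance weighted mean of spectra on a shared wavelength grid.

    fluxes, errors: array-likes of shape (n_obs, n_pixels). Pixels with
    non-finite values or non-positive errors receive zero weight.
    """
    fluxes = np.asarray(fluxes, dtype=float)
    errors = np.asarray(errors, dtype=float)
    good = np.isfinite(fluxes) & np.isfinite(errors) & (errors > 0)

    # Weight each pixel by 1 / sigma^2; bad pixels keep weight 0.
    weights = np.zeros_like(fluxes)
    weights[good] = 1.0 / errors[good] ** 2
    weight_sum = weights.sum(axis=0)
    safe_flux = np.where(good, fluxes, 0.0)

    with np.errstate(invalid="ignore", divide="ignore"):
        coadd = (weights * safe_flux).sum(axis=0) / weight_sum
        coadd_err = 1.0 / np.sqrt(weight_sum)

    # Pixels with no valid observation are returned as NaN.
    coadd[weight_sum == 0] = np.nan
    coadd_err[weight_sum == 0] = np.nan
    return coadd, coadd_err


# Two hypothetical observations of one star over three pixels.
fluxes = [[1.0, 0.8, np.nan], [0.9, 0.8, 0.7]]
errors = [[0.1, 0.1, 0.1], [0.1, 0.2, 0.1]]
flux, err = coadd_spectra(fluxes, errors)
```

Here the first pixel averages two equally weighted measurements, the second favours the lower-noise observation, and the third falls back to the single valid value, so the coadded errors shrink only where more than one good observation exists.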

Below is a comparison of GALAH DR3 (upper panels) and GALAH DR4 (lower panels, this release). The smooth light blue background indicates all measurements, whereas the colormap shows the number of unflagged measurements at each point.